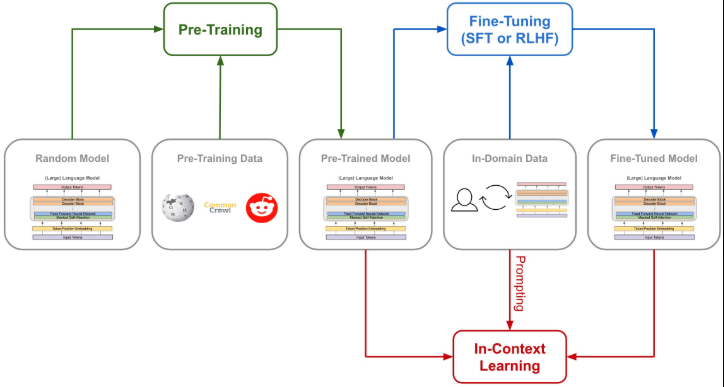

I implemented a GPT-style decoder-only transformer in PyTorch from scratch, including multi-head causal self-attention, Rotary Position Embeddings (RoPE), SwiGLU feed-forward layers, and RMSNorm. The goal was to pretrain the model on web text and then fine-tune it for conversational responses using supervised fine-tuning (SFT) with selective loss masking.

Model Architecture

The model uses token embeddings only, with no separate positional embedding layer. Position is encoded inside the attention mechanism via RoPE, which rotates query and key vectors by position-dependent angles so that attention scores depend on relative offsets; this is intended to help length generalization. Each transformer block consists of multi-head causal self-attention with RoPE, followed by a feed-forward network using the SwiGLU gated activation (the main path multiplied elementwise by the Swish of the gate path). Normalization layers use RMSNorm rather than standard LayerNorm. The decoder is autoregressive: a causal mask ensures that each token attends only to itself and earlier tokens.
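To make the building blocks above concrete, here is a minimal PyTorch sketch of RMSNorm, SwiGLU, RoPE, and a causally masked attention step. It is an illustration under stated assumptions, not the project's actual code: the names (`RMSNorm`, `SwiGLU`, `rope_tables`, `apply_rope`, `causal_attention`), the RoPE base of 10000, the interleaved pairing of features, and the `eps` value are all choices made for this example.

```python
import math

import torch
import torch.nn as nn
import torch.nn.functional as F


class RMSNorm(nn.Module):
    """Rescale features by their root mean square (no mean subtraction, no bias)."""

    def __init__(self, dim: int, eps: float = 1e-6):  # eps is an assumed default
        super().__init__()
        self.eps = eps
        self.weight = nn.Parameter(torch.ones(dim))

    def forward(self, x: torch.Tensor) -> torch.Tensor:
        inv_rms = torch.rsqrt(x.pow(2).mean(dim=-1, keepdim=True) + self.eps)
        return x * inv_rms * self.weight


class SwiGLU(nn.Module):
    """Gated feed-forward: down(up(x) * SiLU(gate(x))); SiLU is Swish with beta = 1."""

    def __init__(self, dim: int, hidden_dim: int):
        super().__init__()
        self.gate = nn.Linear(dim, hidden_dim, bias=False)
        self.up = nn.Linear(dim, hidden_dim, bias=False)
        self.down = nn.Linear(hidden_dim, dim, bias=False)

    def forward(self, x: torch.Tensor) -> torch.Tensor:
        return self.down(self.up(x) * F.silu(self.gate(x)))


def rope_tables(seq_len: int, head_dim: int, base: float = 10000.0):
    """Precompute cos/sin tables of shape (seq_len, head_dim // 2)."""
    inv_freq = 1.0 / (base ** (torch.arange(0, head_dim, 2).float() / head_dim))
    angles = torch.outer(torch.arange(seq_len).float(), inv_freq)
    return angles.cos(), angles.sin()


def apply_rope(x: torch.Tensor, cos: torch.Tensor, sin: torch.Tensor) -> torch.Tensor:
    """Rotate each consecutive feature pair of x (batch, heads, seq, head_dim)."""
    x1, x2 = x[..., 0::2], x[..., 1::2]
    rotated = torch.stack((x1 * cos - x2 * sin, x1 * sin + x2 * cos), dim=-1)
    return rotated.flatten(-2)  # re-interleave the pairs


def causal_attention(q: torch.Tensor, k: torch.Tensor, v: torch.Tensor) -> torch.Tensor:
    """Single attention step with RoPE on q/k and a causal mask."""
    seq_len, head_dim = q.size(-2), q.size(-1)
    cos, sin = rope_tables(seq_len, head_dim)
    cos, sin = cos.to(q.device), sin.to(q.device)
    q, k = apply_rope(q, cos, sin), apply_rope(k, cos, sin)
    scores = q @ k.transpose(-2, -1) / math.sqrt(head_dim)
    # True above the diagonal marks future positions, which are blocked.
    future = torch.triu(
        torch.ones(seq_len, seq_len, dtype=torch.bool, device=q.device), diagonal=1
    )
    scores = scores.masked_fill(future, float("-inf"))
    return torch.softmax(scores, dim=-1) @ v
```

Because RoPE is a pure rotation, it leaves vector norms unchanged and leaves position 0 untouched, which makes it easy to sanity-check in a unit test. The mask keeps the diagonal, so each position can still attend to itself.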

Pretraining Pipeline

I built a scalable pretraining pipeline over the FineWeb-Edu dataset using Hugging Face Arrow datasets. Custom GPT dataset and data loader utilities feed a training loop that supports mixed precision (float16 or bfloat16) and gradient accumulation for large effective batch sizes. Training uses a cosine learning rate schedule with warmup, plus gradient clipping. The model is pretrained with a next-token prediction loss on contiguous text.
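The cosine schedule with warmup mentioned above can be sketched as a small pure-Python function. This is one common formulation, not necessarily the one used in the project: the function name `lr_at_step`, the linear warmup shape, and the `min_lr` floor are assumptions for illustration.

```python
import math


def lr_at_step(step: int, max_lr: float, min_lr: float,
               warmup_steps: int, total_steps: int) -> float:
    """Linear warmup to max_lr, then cosine decay down to min_lr."""
    if step < warmup_steps:
        # Ramp up linearly; step 0 already gets a small non-zero rate.
        return max_lr * (step + 1) / warmup_steps
    if step >= total_steps:
        # Past the planned horizon, hold at the floor.
        return min_lr
    progress = (step - warmup_steps) / (total_steps - warmup_steps)
    cosine = 0.5 * (1.0 + math.cos(math.pi * progress))  # goes from 1 to 0
    return min_lr + cosine * (max_lr - min_lr)
```

In a PyTorch loop, a value like this would typically be written into each entry of `optimizer.param_groups` before the update. With gradient accumulation, the loss of each micro-batch is divided by the number of accumulation steps, and `torch.nn.utils.clip_grad_norm_` is called once on the accumulated gradients just before `optimizer.step()`.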

Supervised Fine-Tuning for Conversation

For conversational ability, I designed an SFT framework that formats dialogs with special tokens for user, assistant, and system roles. Only assistant responses contribute to the loss: labels for user and system turns are set to -100 so that they are ignored. The model is fine-tuned on a conversation dataset (e.g., SmolTalk) so that it learns to generate coherent, on-role replies. After SFT, the model is evaluated on a held-out set of multiple-choice questions across topics to measure knowledge and reasoning.
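The selective loss masking described above can be illustrated with a short sketch that builds `input_ids` and `labels` for one dialog. Everything named here is hypothetical: the role markers `<|system|>`, `<|user|>`, `<|assistant|>`, the `<|end|>` terminator, the helper `build_sft_example`, and the byte-level `toy_encode` stand in for the project's real special tokens and tokenizer. The sketch also assumes the role marker itself is masked and only the assistant's text and end marker are trained on.

```python
IGNORE_INDEX = -100  # default ignore_index of PyTorch's cross-entropy loss


def toy_encode(text: str) -> list[int]:
    """Stand-in tokenizer: one id per UTF-8 byte (for illustration only)."""
    return list(text.encode("utf-8"))


def build_sft_example(turns, encode=toy_encode):
    """turns: list of (role, text) pairs. Returns (input_ids, labels)."""
    input_ids, labels = [], []
    for role, text in turns:
        header = encode(f"<|{role}|>")   # hypothetical role marker
        body = encode(text + "<|end|>")  # hypothetical end-of-turn marker
        input_ids += header + body
        if role == "assistant":
            # Train on the reply and its end marker, not on the role marker.
            labels += [IGNORE_INDEX] * len(header) + body
        else:
            # User and system turns are context only.
            labels += [IGNORE_INDEX] * (len(header) + len(body))
    return input_ids, labels


turns = [("system", "Be brief."), ("user", "Hi"), ("assistant", "Hello")]
input_ids, labels = build_sft_example(turns)
```

The labels stay aligned with `input_ids` here; the usual one-position shift for next-token prediction is applied when the loss is computed. Positions labeled -100 are skipped by `torch.nn.functional.cross_entropy` under its default `ignore_index`, so only assistant tokens produce gradient.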

All core components (embedding, attention with RoPE, SwiGLU, transformer block, full model, pretraining and SFT scripts) are implemented and tested with unit tests and debug notebooks. Code is available on GitHub.