I architected a cardiac anomaly detection system that uses deep learning to classify heart sounds from digital stethoscope audio. The project applies machine learning to mechanical engineering and healthcare problems by turning raw auscultation signals into automated, interpretable predictions. The system was trained and evaluated on more than 5,000 stethoscope recordings.

Data and Preprocessing

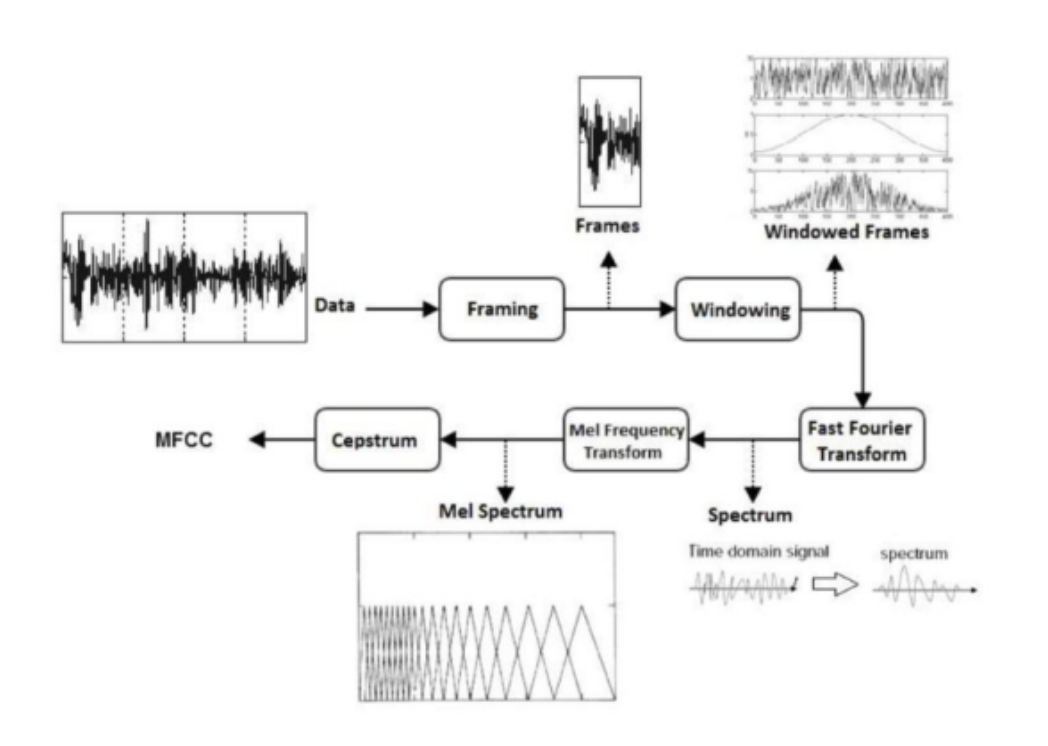

Heart sound recordings were collected from digital stethoscopes and preprocessed for model input. For feature extraction I computed spectrograms and Mel-frequency cepstral coefficients (MFCCs), so that both time-frequency structure and perceptual spectral features were available to the model. This step was critical for capturing the discriminative information in heart sounds while keeping the input dimensionality manageable.
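To make the feature extraction step concrete, here is a minimal NumPy sketch of how a log-mel spectrogram and MFCCs can be derived from one audio segment. It is an illustration, not the exact pipeline used in the thesis: the sampling rate, frame length, hop size, number of mel bands, and number of coefficients are all assumed values, the function names are hypothetical, and the input is a synthetic signal rather than a real heart sound.

```python
import numpy as np

def hz_to_mel(f):
    return 2595.0 * np.log10(1.0 + np.asarray(f, dtype=float) / 700.0)

def mel_to_hz(m):
    return 700.0 * (10.0 ** (np.asarray(m, dtype=float) / 2595.0) - 1.0)

def mel_filterbank(n_mels, n_fft, sr):
    """Triangular mel filters, shape (n_mels, n_fft // 2 + 1)."""
    # n_mels + 2 band edges, evenly spaced on the mel scale from 0 Hz to Nyquist
    edges_hz = mel_to_hz(np.linspace(hz_to_mel(0.0), hz_to_mel(sr / 2.0), n_mels + 2))
    fft_freqs = np.linspace(0.0, sr / 2.0, n_fft // 2 + 1)
    fb = np.zeros((n_mels, fft_freqs.size))
    for i in range(n_mels):
        lo, mid, hi = edges_hz[i], edges_hz[i + 1], edges_hz[i + 2]
        rising = (fft_freqs - lo) / (mid - lo)
        falling = (hi - fft_freqs) / (hi - mid)
        fb[i] = np.clip(np.minimum(rising, falling), 0.0, None)
    return fb

def log_mel_and_mfcc(y, sr, n_fft=256, hop=64, n_mels=32, n_mfcc=13):
    """Return (log_mel, mfcc) with shapes (n_mels, n_frames) and (n_mfcc, n_frames)."""
    # Slice the signal into overlapping frames and apply a Hann window
    n_frames = 1 + (len(y) - n_fft) // hop
    idx = np.arange(n_fft)[None, :] + hop * np.arange(n_frames)[:, None]
    frames = y[idx] * np.hanning(n_fft)
    # Power spectrum per frame, then pool FFT bins into mel bands
    power = np.abs(np.fft.rfft(frames, axis=1)) ** 2
    mel = power @ mel_filterbank(n_mels, n_fft, sr).T
    log_mel = 10.0 * np.log10(mel + 1e-10)  # small offset avoids log(0)
    # Unnormalized DCT-II across mel bands gives the cepstral coefficients
    n = np.arange(n_mels)
    k = np.arange(n_mfcc)
    basis = np.cos(np.pi / n_mels * (n[None, :] + 0.5) * k[:, None])
    mfcc = log_mel @ basis.T
    return log_mel.T, mfcc.T

# Synthetic 2-second stand-in signal (assumed 2 kHz sampling rate)
sr = 2000
t = np.arange(2 * sr) / sr
rng = np.random.default_rng(0)
y = np.sin(2 * np.pi * 60.0 * t) + 0.1 * rng.standard_normal(t.size)

log_mel, mfcc = log_mel_and_mfcc(y, sr)
print(log_mel.shape, mfcc.shape)
```

The log-mel map keeps the full time-frequency picture, while the MFCCs compress each frame to a handful of coefficients, which is the dimensionality trade-off described above. In practice a library such as librosa would usually replace the hand-written steps.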

Model and Training

I used convolutional neural networks to learn from the extracted features and classify each segment as normal or anomalous. The CNN architecture was designed to capture local patterns in the spectrogram or MFCC maps and to scale to the size of the dataset. Training followed standard practices for data splitting, validation, and regularization to limit overfitting.
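As a rough picture of what such a classifier can look like, the following PyTorch sketch defines a small two-block CNN over single-channel feature maps. It is not the thesis architecture: the class name `HeartSoundCNN`, the layer widths, the dropout rate, and the 32 x 59 input size are all assumptions chosen for illustration.

```python
import torch
from torch import nn

class HeartSoundCNN(nn.Module):
    """Hypothetical small CNN for (1, n_bands, n_frames) feature maps."""

    def __init__(self, n_classes=2, dropout=0.3):
        super().__init__()
        self.features = nn.Sequential(
            # 3x3 kernels pick up local time-frequency patterns
            nn.Conv2d(1, 16, kernel_size=3, padding=1),
            nn.BatchNorm2d(16),
            nn.ReLU(),
            nn.MaxPool2d(2),
            nn.Conv2d(16, 32, kernel_size=3, padding=1),
            nn.BatchNorm2d(32),
            nn.ReLU(),
            nn.MaxPool2d(2),
            # Global average pooling makes the head independent of input size
            nn.AdaptiveAvgPool2d(1),
        )
        self.classifier = nn.Sequential(
            nn.Flatten(),
            nn.Dropout(dropout),  # regularization against overfitting
            nn.Linear(32, n_classes),
        )

    def forward(self, x):
        return self.classifier(self.features(x))

# Forward pass on a random batch shaped like 4 feature maps of 32 bands x 59 frames
model = HeartSoundCNN().eval()
batch = torch.randn(4, 1, 32, 59)
with torch.no_grad():
    logits = model(batch)
print(logits.shape)  # one logit per class (normal, anomalous) for each segment
```

Batch normalization and dropout stand in here for the regularization mentioned above. The actual choices in the thesis may differ.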

Results

The system achieved 82.08% accuracy on the heart sound classification task, demonstrating that automated cardiac anomaly detection from stethoscope audio is feasible with deep learning. The work contributes to the broader goal of low-cost, accessible screening tools that can support clinicians and reduce reliance on specialized equipment in resource-constrained settings.
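For readers less familiar with the metric, the short sketch below shows how accuracy is computed from predicted and true labels, alongside the confusion counts it is built from. The labels are made-up toy values, not results from this project, and the helper names are hypothetical. Sensitivity and specificity are included only to show what the same counts can yield. They were not reported above.

```python
def confusion_counts(y_true, y_pred, positive=1):
    """Count true/false positives and negatives for a binary task."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == positive and p == positive)
    tn = sum(1 for t, p in pairs if t != positive and p != positive)
    fp = sum(1 for t, p in pairs if t != positive and p == positive)
    fn = sum(1 for t, p in pairs if t == positive and p != positive)
    return tp, tn, fp, fn

def summarize(y_true, y_pred):
    tp, tn, fp, fn = confusion_counts(y_true, y_pred)
    return {
        "accuracy": (tp + tn) / (tp + tn + fp + fn),
        "sensitivity": tp / (tp + fn),  # fraction of anomalous segments caught
        "specificity": tn / (tn + fp),  # fraction of normal segments cleared
    }

# Toy labels only (1 = anomalous, 0 = normal); not data from the thesis
y_true = [1, 1, 1, 0, 0, 0, 0, 1]
y_pred = [1, 0, 1, 0, 0, 1, 0, 1]
metrics = summarize(y_true, y_pred)
print(metrics)
```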

This project was completed as part of my Master's thesis at IIT Kharagpur under the guidance of Prof. Aditya Bandopadhyay, with a focus on machine learning applications to mechanical engineering and biomedical problems.